ARTIFICIAL INTELLIGENCE: BLESSING OR CURSE?

The image, according to ChatGPT, features the phrase “ARTIFICIAL INTELLIGENCE” at its centre, overlaid on a stylized depiction of a microchip and circuitry. This design symbolizes the integration of advanced AI technologies within modern electronic systems, emphasizing the critical role of AI in driving technological innovation and enhancing computational capabilities across various industries.

RADAR

USE OF ARTIFICIAL INTELLIGENCE FOR POLITICAL MANIPULATION

The AI propaganda campaign in Rwanda has been pushing pro-Kagame messages

BY MORGAN WACK | ASSISTANT RESEARCH PROFESSOR OF POLITICAL SCIENCE IN THE MEDIA FORENSICS HUB, CLEMSON UNIVERSITY

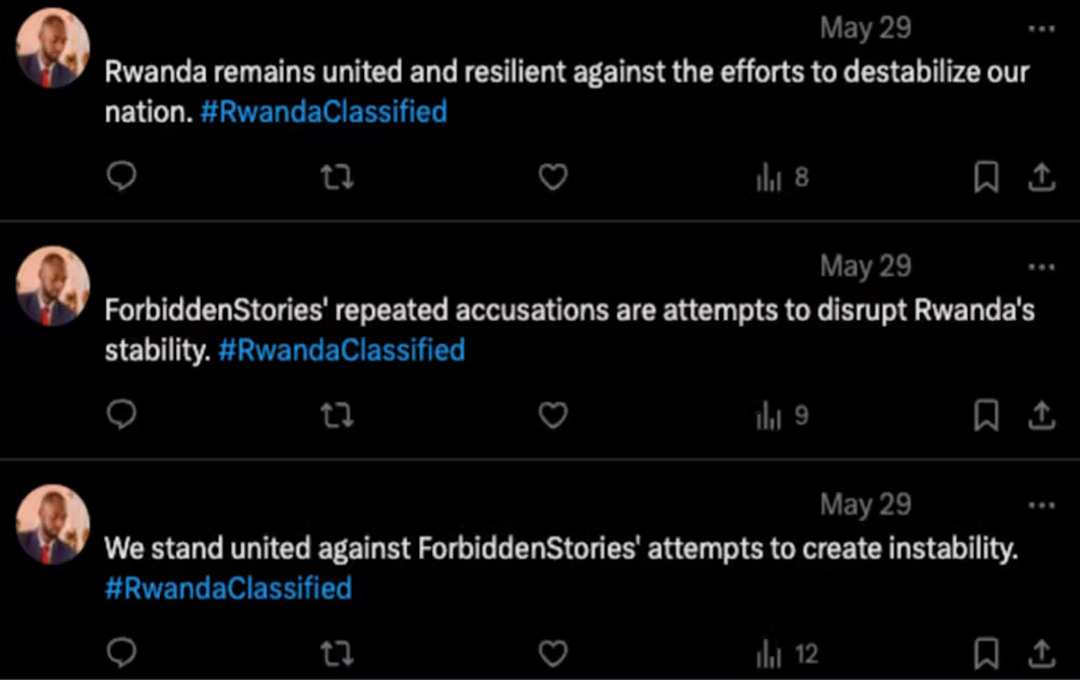

At the end of May 2024, several media outlets led by the journalist network Forbidden Stories released a set of reports titled “Rwanda Classified”. This reporting detailed evidence related to the suspicious death of Rwandan journalist and government critic John Williams Ntwali. The reports included additional details on Kigali’s efforts to silence critics.

At the Media Forensics Hub, we monitor the internet for evidence of coordinated influence operations. Following the publication of Rwanda Classified, we identified at least 464 accounts that flooded online discussions with content supportive of the Paul Kagame regime. Many of the accounts we linked to this network had been active on X/Twitter since January 2024. During this time, the network produced over 650,000 messages.

Rwandans voted on 15 July 2024. The presidential result was a foregone conclusion due in no small part to the expulsion of opposition candidates, harassment of journalists, and assassination of critics. Kagame garnered over 99% of the vote. Even though the outcome was inevitable, accounts in the network were repurposed to promote Kagame’s candidacy online. The inauthentic posts will likely be used as evidence of the president’s alleged popularity and the legitimacy of the election.

In both the response to Rwanda Classified and the pro-Kagame presidential campaign, we identified the use of AI tools to disrupt online discussions and promote government narratives. The large language model ChatGPT was one of the tools used.

The coordinated use of these tools is ominous. It’s a sign that the methods used to manipulate perceptions and maintain power are getting more sophisticated. Generative AI enables networks to produce a higher volume of varied content than solely human-operated campaigns can. In this instance, the consistency of posting patterns and content markers made it easier to detect the network.

Influence networks

Coordinated influence operations have become commonplace in African digital spaces. Though each network is distinct, they all aim to make inauthentic content look genuine. These operations often “promote” material aligned with their interests, while they try to “demote” other discussions by flooding them with unrelated content. This appears to be the case with the network we identified.

In East Africa alone, social media platforms have removed networks of accounts that were created to appear legitimate and that targeted Ugandan, Tanzanian, and Ethiopian citizens with false and partisan political information. The operation of bots and websites in South Africa and Rwanda has also been traced to non-state actors, including several global PR firms.

Unlike prior campaigns, the pro-Kagame network we identified rarely copied text verbatim. Instead, the associated accounts used ChatGPT to create content with similar but not identical topics and targets. They then posted the content alongside a range of hashtags.

These messages were then used to flood legitimate discussions with either unrelated or pro-government content. This included information on Rwandans’ ties to sporting clubs, as well as direct attempts to discredit reporters and outlets involved in the Rwanda Classified investigations.

AI and propaganda

The integration of AI tools in online campaigns has the potential to alter the influence of propaganda for several reasons:

Scale and efficiency: AI tools enable the rapid production of large volumes of content. Producing that output without these tools would require more resources, people, and time.

Borderless reach: Techniques such as automated translation enable actors to influence discussions across borders. For example, the most common target of the inauthentic Rwandan network was discussion of the conflict in eastern Democratic Republic of Congo.

Network attribution: Certain patterns of behavior can indicate coordination. Generative AI tools enable the seamless creation of subtle variations in the text, which complicate attribution efforts.

What can be done?

As the primary target of most influence operations, citizens need to be prepared to manage the evolution of these efforts. Governments, NGOs, and educators should consider expanding digital literacy programs to aid in the management of digital threats.

Improved communication is also needed between operators of social media platforms, such as X/Twitter, and providers of large language model services, such as OpenAI. Both play a role in allowing influence networks to flourish. When inauthentic activities can be tied to specific actors, operators should consider temporary bans or outright expulsions.

Lastly, governments should aim to raise the costs of using AI tools improperly. Without real consequences, such as restrictions on foreign aid or targeted sanctions, self-interested actors will continue to experiment with increasingly powerful AI tools with impunity.